The benefits of 3D hand-eye calibration

In version 1.6 of the Zivid SDK, we released a new feature, the 3D hand-eye calibration API.

That might raise questions such as:

- What is hand-eye calibration?

- Does my automation task need hand-eye calibration?

- What benefits do I get from Zivid's 3D hand-eye calibration API?

Want to go more in depth? Watch our tutorial series about hand-eye calibration.

The concept of hand-eye calibration.

Even if you don't think about it, you use hand-eye calibration every day. Every task you solve with your hands, from picking up objects of all textures and sizes to delicate dexterity tasks such as sewing, requires your hands and eyes to be correctly calibrated.

Four main contributors enable us to master such tasks:

- Your eyes. Our vision captures high-resolution, wide-dynamic-range images over an extreme range of working distances, with color and depth perception on virtually any object.

- Your brain. Our brain is incredibly good at quickly processing large amounts of data and performing stereo matching of the images captured by our eyes. (By the way, this "algorithm" is orders of magnitude better than any computer algorithm currently available.)

- Your arms and hands. They move effortlessly and grip the objects around us correctly.

- Hand-eye calibration. Since we were kids, our brain has used trial and error, experience, and knowledge to create a perfect calibration of where our eyes, arms, and body are relative to each other.

So, what can happen if our hand-eye calibration is wrong?

In a video from Good Mythical Morning, the hosts compete at the simple task of pouring water into a bottle while wearing glasses that flip the world upside down.

They struggle to fill the bottle because they have to relearn years of hand-eye calibration on the fly. Their hand-eye calibration no longer works because their eyes and arms are no longer operating in the same coordinate system.

We now understand that when we pick up an object:

- Our eyes capture images of the object.

- Our brain processes these images, finds the object, and tells our arms and hands where to go and how to pick up the object.

- Hand-eye calibration makes it all possible.

This brings us to what hand-eye calibration means for robot applications.

Hand-eye calibration for robot applications.

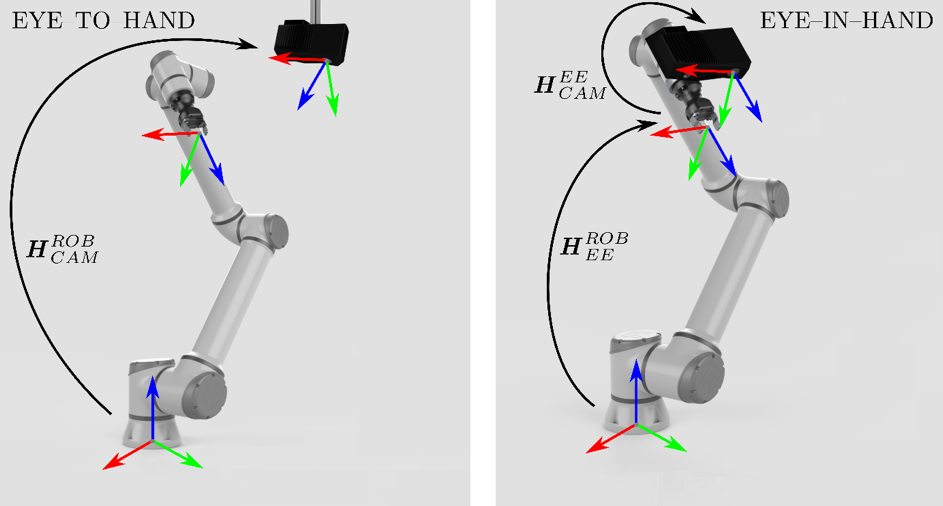

There are two variants of hand-eye calibration, and Zivid’s hand-eye calibration API supports both:

- Eye-to-hand. The camera is mounted in a fixed position next to the robot. In this case we need to find the transformation from the camera’s coordinate system to the coordinate system of the robot base. Once it is known, anything found in camera coordinates can easily be transformed into the robot’s coordinate system.

- Eye-in-hand. The camera is mounted on the robot arm itself. In this case we need to find the transformation from the robot end-effector (the robot tool point) to the camera. Since the robot always knows the pose of its end-effector relative to the robot base, chaining the two transformations gives us the necessary transformation from the camera to the robot base.
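Both cases come down to chaining 4x4 homogeneous transforms. The Python sketch below shows how a finished calibration result would be applied to a point measured by the camera. It assumes transforms stored as plain nested lists and units in metres; the names `base_T_camera`, `base_T_ee` and `ee_T_camera` (read `a_T_b` as "maps coordinates in frame b into frame a") are our own and are not part of the Zivid API.

```python
from typing import List, Sequence

Matrix = List[List[float]]  # 4x4 homogeneous transform, row-major


def make_transform(rotation: Sequence[Sequence[float]], translation: Sequence[float]) -> Matrix:
    """Build a 4x4 homogeneous transform from a 3x3 rotation and a 3D translation."""
    return [list(rotation[i]) + [translation[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply two 4x4 matrices (a @ b)."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def transform_point(t: Matrix, p: Sequence[float]) -> List[float]:
    """Apply a 4x4 homogeneous transform to a 3D point."""
    ph = [p[0], p[1], p[2], 1.0]
    return [sum(t[i][k] * ph[k] for k in range(4)) for i in range(3)]


def eye_to_hand(base_T_camera: Matrix, p_camera: Sequence[float]) -> List[float]:
    # Stationary camera: the calibration result alone maps camera coordinates to base coordinates.
    return transform_point(base_T_camera, p_camera)


def eye_in_hand(base_T_ee: Matrix, ee_T_camera: Matrix, p_camera: Sequence[float]) -> List[float]:
    # Camera on the arm: chain the end-effector pose reported by the robot (changes with every move)
    # with the fixed end-effector-to-camera transform found by the calibration.
    return transform_point(matmul(base_T_ee, ee_T_camera), p_camera)


if __name__ == "__main__":
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    rot_z_90 = [[0.0, -1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]]
    base_T_ee = make_transform(rot_z_90, [0.5, 0.0, 1.0])
    ee_T_camera = make_transform(identity, [0.0, 0.0, 0.1])
    print(eye_in_hand(base_T_ee, ee_T_camera, [1.0, 0.0, 0.0]))  # [0.5, 1.0, 1.1]
```

In the eye-in-hand case the product `base_T_ee @ ee_T_camera` has to be recomputed for every robot pose, whereas the eye-to-hand result can be reused as long as the camera does not move.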

Why do I need hand-eye calibration for my automation task?

In many applications, especially robot-driven ones, hand-eye calibration is a must. Not only does it drastically simplify integration, it also often increases the accuracy of the application.

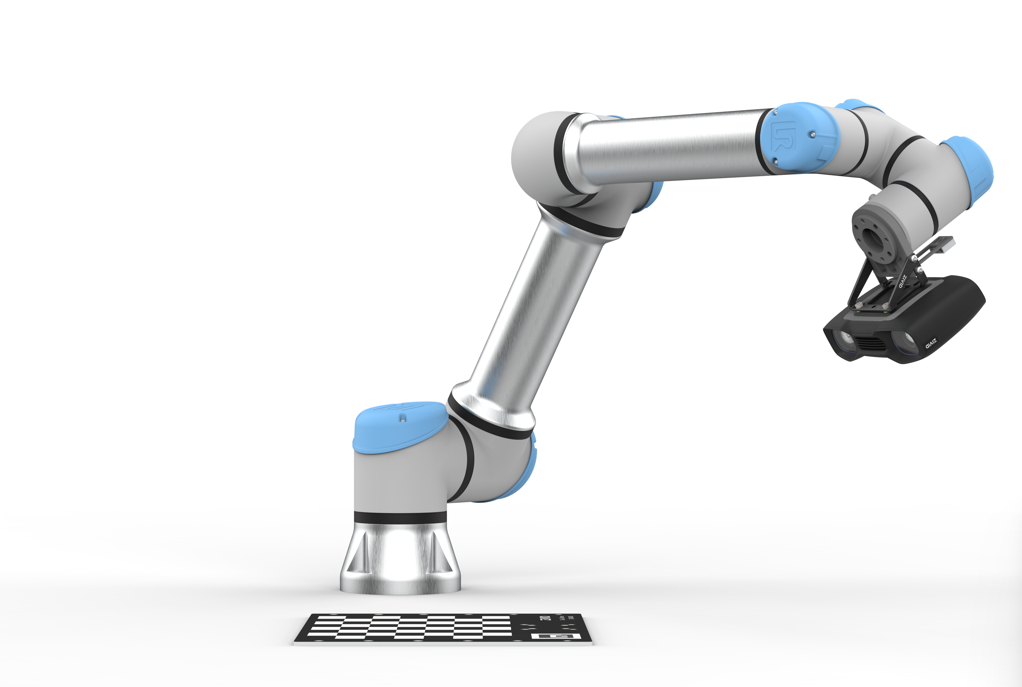

Assume your target objects are in a bin with a Zivid 3D camera mounted above it, and your robot with a gripper is next to the bin. After performing an eye-to-hand calibration, a 3D bin-picking sequence could look like this:

- The Zivid One+ camera captures a 3D image of the scene. The output is a high-precision point cloud in which every point has a corresponding color value (1:1).

- Run a detection algorithm on the point cloud to find the pose of the desired object (typically a pick position plus a pick orientation).

- Use the eye-to-hand calibration to transform the pick pose to the robot’s coordinate system.

- The application can now move the robot's gripper to the correct pose and pick the object.
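The four steps can be sketched as a single function. This is a hypothetical illustration, not Zivid SDK code: `capture`, `detect` and `move_to` are placeholder callables standing in for the camera SDK, your detection algorithm and your robot interface, and `base_T_camera` is assumed to be the 4x4 result of an eye-to-hand calibration.

```python
from typing import Any, Callable, List, Sequence

Matrix = List[List[float]]  # 4x4 homogeneous transform, row-major


def make_transform(rotation: Sequence[Sequence[float]], translation: Sequence[float]) -> Matrix:
    """Build a 4x4 homogeneous transform from a 3x3 rotation and a 3D translation."""
    return [list(rotation[i]) + [translation[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply two 4x4 matrices (a @ b)."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def bin_picking_cycle(
    capture: Callable[[], Any],
    detect: Callable[[Any], Matrix],
    move_to: Callable[[Matrix], None],
    base_T_camera: Matrix,
) -> Matrix:
    # Step 1: capture a point cloud (placeholder for the camera SDK call).
    point_cloud = capture()
    # Step 2: detect the object; the result is the pick pose in camera coordinates.
    camera_T_pick = detect(point_cloud)
    # Step 3: apply the eye-to-hand calibration to express the pick pose in robot base coordinates.
    base_T_pick = matmul(base_T_camera, camera_T_pick)
    # Step 4: send the gripper to that pose (placeholder for the robot interface).
    move_to(base_T_pick)
    return base_T_pick


if __name__ == "__main__":
    # Example values: camera 1.5 m above the base origin, looking straight down (180 degrees about x).
    looking_down = [[1.0, 0.0, 0.0], [0.0, -1.0, 0.0], [0.0, 0.0, -1.0]]
    base_T_camera = make_transform(looking_down, [0.4, 0.0, 1.5])
    found = make_transform([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]], [0.05, 0.1, 1.2])
    pose = bin_picking_cycle(lambda: "point cloud", lambda cloud: found, print, base_T_camera)
```

Because the detected pose includes orientation, the whole 4x4 matrix is transformed, not just the pick position; otherwise the gripper would reach the right point at the wrong angle.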

Hand-eye calibration is the link between the robot and the camera, so it is easy to see why an accurate hand-eye calibration is essential to solving the automation task: any error in it carries directly into where the gripper is sent. With a high-accuracy 3D camera, you only need a single snapshot to know the target object’s position in space before successfully picking it.

Benefits of using Zivid’s 3D hand-eye calibration.

A hand-eye calibration is a minimization problem over pairs of robot poses and corresponding camera poses. The robot poses are read directly from the robot, while the camera poses are calculated from camera images of a calibration object.
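To make the minimization concrete, the sketch below evaluates one common textbook formulation for the eye-in-hand case, often written AX = XB: for each pair of poses, A is the relative motion of the end-effector, B is the relative motion of the calibration object as seen by the camera, and X is the unknown end-effector-to-camera transform. The article does not state which cost Zivid's solver minimizes, so treat this as an illustration of the idea only; all function names are hypothetical and the data is simulated and noise-free.

```python
import math
from typing import List, Sequence

Matrix = List[List[float]]  # 4x4 homogeneous transform, row-major


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply two 4x4 matrices (a @ b)."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def inverse_rigid(t: Matrix) -> Matrix:
    """Invert a rigid transform [R | p] as [R^T | -R^T p]."""
    rt = [[t[j][i] for j in range(3)] for i in range(3)]
    p = [-sum(rt[i][k] * t[k][3] for k in range(3)) for i in range(3)]
    return [rt[i] + [p[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]


def axis_angle_transform(axis: Sequence[float], angle: float, translation: Sequence[float]) -> Matrix:
    """Rigid transform from a unit axis, an angle in radians and a translation (Rodrigues' formula)."""
    kx, ky, kz = axis
    c, s, v = math.cos(angle), math.sin(angle), 1.0 - math.cos(angle)
    r = [[c + kx * kx * v, kx * ky * v - kz * s, kx * kz * v + ky * s],
         [ky * kx * v + kz * s, c + ky * ky * v, ky * kz * v - kx * s],
         [kz * kx * v - ky * s, kz * ky * v + kx * s, c + kz * kz * v]]
    return [r[i] + [translation[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]


def handeye_residual(base_T_ee: Sequence[Matrix], camera_T_target: Sequence[Matrix], ee_T_camera: Matrix) -> float:
    """Sum of squared Frobenius norms of A X - X B over consecutive pose pairs (eye-in-hand).

    A = relative end-effector motion, B = relative target motion seen by the camera,
    X = candidate end-effector-to-camera transform. A perfect X on perfect data gives 0.
    """
    x = ee_T_camera
    total = 0.0
    for i in range(len(base_T_ee) - 1):
        a = matmul(inverse_rigid(base_T_ee[i + 1]), base_T_ee[i])
        b = matmul(camera_T_target[i + 1], inverse_rigid(camera_T_target[i]))
        ax, xb = matmul(a, x), matmul(x, b)
        total += sum((ax[r][c] - xb[r][c]) ** 2 for r in range(4) for c in range(4))
    return total


if __name__ == "__main__":
    # Simulated ground truth: a known X and a calibration target fixed in the robot base frame.
    x_true = axis_angle_transform((0.0, 0.0, 1.0), 0.2, (0.01, 0.02, 0.1))
    base_T_target = axis_angle_transform((1.0, 0.0, 0.0), 3.0, (0.6, 0.0, 0.0))
    base_T_ee = [
        axis_angle_transform((0.0, 0.0, 1.0), 0.3, (0.4, 0.1, 0.5)),
        axis_angle_transform((1.0, 0.0, 0.0), 0.4, (0.5, 0.0, 0.6)),
        axis_angle_transform((0.0, 1.0, 0.0), -0.3, (0.3, -0.1, 0.55)),
    ]
    # What the camera would observe if X were exactly x_true: C_i = X^-1 F_i^-1 T.
    camera_T_target = [matmul(inverse_rigid(x_true), matmul(inverse_rigid(f), base_T_target)) for f in base_T_ee]
    x_wrong = axis_angle_transform((0.0, 0.0, 1.0), 0.2, (0.02, 0.02, 0.1))
    print(handeye_residual(base_T_ee, camera_T_target, x_true))   # close to 0
    print(handeye_residual(base_T_ee, camera_T_target, x_wrong))  # clearly larger
```

A calibration solver searches for the X that makes such a residual as small as possible; with real, noisy poses it will not reach zero, which is why the accuracy of the camera poses matters so much.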

A common way to perform this calibration originates from 2D cameras and relies on 2D pose estimation. Put simply, you capture images of a known calibration object, calibrate the camera, and estimate the object's 3D pose from its 2D images.
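For reference, the standard pinhole camera model behind this 2D approach relates a 3D point on the calibration object to its pixel coordinates (the notation is ours, not the article's):

```latex
s \begin{bmatrix} u \\ v \\ 1 \end{bmatrix}
= K \,[\, R \mid t \,]
\begin{bmatrix} X \\ Y \\ Z \\ 1 \end{bmatrix},
\qquad
K = \begin{bmatrix} f_x & 0 & c_x \\ 0 & f_y & c_y \\ 0 & 0 & 1 \end{bmatrix}
```

Here (X, Y, Z) is a known point on the calibration object, (u, v) its detected pixel position, s a scale factor, and (R, t) the object's pose relative to the camera. Because the pose is recovered only through the intrinsics K (and, in practice, a lens distortion model), any error in the 2D camera calibration propagates into every estimated camera pose, and from there into the hand-eye result.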

Anyone who has tried camera calibration knows that it is hard to get right:

- Use a good calibration object, such as a checkerboard. This means very accurate corner-to-corner distances and flatness.

- Take well-exposed images of your calibration object at different distances and angles throughout the calibration volume. Spreading the images out across the calibration volume and covering both the center and the edges of the camera frame is key to achieving a good calibration.
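One way to check the second point is to measure how much of the image the detected calibration corners cover across all captures. The sketch below is a hypothetical helper, not part of any SDK: it divides the image into a coarse grid and reports the fraction of cells that contain at least one detected corner. The 4x3 grid and the 1920x1200 resolution in the usage example are arbitrary example values.

```python
from typing import Iterable, Sequence, Set, Tuple

Point2D = Tuple[float, float]


def frame_coverage(
    captures: Iterable[Sequence[Point2D]],
    width: int,
    height: int,
    cols: int = 4,
    rows: int = 3,
) -> float:
    """Fraction of a cols x rows image grid that contains at least one detected corner.

    captures: one list of detected calibration corners (u, v) in pixels per captured image.
    """
    hit: Set[Tuple[int, int]] = set()
    for corners in captures:
        for u, v in corners:
            # Ignore detections that fall outside the image (e.g. bad corner estimates).
            if 0 <= u < width and 0 <= v < height:
                hit.add((int(u * cols / width), int(v * rows / height)))
    return len(hit) / (cols * rows)


if __name__ == "__main__":
    centre_only = [[(960.0, 600.0)]]
    spread_out = [[(960.0, 600.0)], [(10.0, 10.0)], [(1910.0, 1190.0)]]
    print(frame_coverage(centre_only, 1920, 1200))  # 1 of 12 cells
    print(frame_coverage(spread_out, 1920, 1200))   # 0.25
```

A low score is a hint to add poses that place the calibration object near the edges and corners of the image before running the calibration.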

Neither step is trivial, but step two is especially challenging, as even the most experienced camera calibration experts will agree. At Zivid, we factory-calibrate every single 3D camera and have dedicated thousands of engineering hours to ensuring accurate camera calibration.

So the question is: should you recalibrate the 2D camera and estimate poses from 2D images when your 3D camera already provides highly accurate point clouds?

Well, the short answer is: probably not.

Zivid’s hand-eye calibration API uses the factory-calibrated point cloud to calculate the hand-eye calibration. Not only does this yield a better result, it does so with fewer robot poses. More importantly, the result is repeatable and easy to obtain.
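The following Python sketch is not Zivid's algorithm, which the article does not describe. It only illustrates the underlying idea: when every detected corner already has measured 3D coordinates in the point cloud, the calibration object's pose can be read off geometrically, without going through the camera intrinsics. The function name and the three-corner construction are our simplifications; a practical implementation would fit all corners in a least-squares sense (for example with the Kabsch algorithm).

```python
import math
from typing import List, Sequence

Vec = List[float]
Matrix = List[List[float]]  # 4x4 homogeneous transform, row-major


def sub(a: Sequence[float], b: Sequence[float]) -> Vec:
    return [a[i] - b[i] for i in range(3)]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(a[i] * b[i] for i in range(3))


def cross(a: Sequence[float], b: Sequence[float]) -> Vec:
    return [a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0]]


def normalize(a: Sequence[float]) -> Vec:
    n = math.sqrt(dot(a, a))
    return [x / n for x in a]


def board_pose_from_points(origin: Sequence[float], on_x: Sequence[float], on_y: Sequence[float]) -> Matrix:
    """camera_T_board from three measured 3D corners, all in camera coordinates.

    origin: the board's origin corner; on_x / on_y: corners along the board's x and y edges.
    """
    x_axis = normalize(sub(on_x, origin))
    y_raw = sub(on_y, origin)
    # Gram-Schmidt: remove any x component (measurement noise) so that y is orthogonal to x.
    proj = dot(y_raw, x_axis)
    y_axis = normalize([y_raw[i] - proj * x_axis[i] for i in range(3)])
    # The board normal completes a right-handed frame.
    z_axis = cross(x_axis, y_axis)
    # Columns are the board axes expressed in camera coordinates; the last column is the origin.
    return [
        [x_axis[0], y_axis[0], z_axis[0], origin[0]],
        [x_axis[1], y_axis[1], z_axis[1], origin[1]],
        [x_axis[2], y_axis[2], z_axis[2], origin[2]],
        [0.0, 0.0, 0.0, 1.0],
    ]


if __name__ == "__main__":
    # Example: a board 1 m in front of the camera, facing it squarely.
    for row in board_pose_from_points([0.0, 0.0, 1.0], [0.3, 0.0, 1.0], [0.0, 0.2, 1.0]):
        print(row)
```

The resulting camera_T_board poses are exactly the "camera poses" that the hand-eye minimization pairs with the robot poses.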

The following graphs show typical rotational and translational errors as a function of the number of images used per calibration.

As you can see, a typical calibration improvement is a 3x reduction in translation error and a 5x reduction in rotational error compared with the 2D-based approach.

What’s next?

Regardless of your prior knowledge of hand-eye calibration, we hope you found this topic interesting. As you have seen, hand-eye calibration is one of the essential factors when working with 3D vision and robots. Zivid’s 3D hand-eye calibration makes the calibration process more accessible and reliable.

Want to know more? Watch the short video series we created to explain hand-eye calibration and how to optimize your calibration method.
