The new 3D vision tools enabling bin-picking automation

Matthew Dale of Imaging & Machine Vision Europe explores how 3D vision tools enable automated bin-picking in his latest feature article.

With 2020 as a backdrop, the trend is apparent: behavior is shifting towards online shopping, which puts additional demand on warehousing and logistics. Amazon alone, for example, is hiring over a hundred thousand extra staff to address e-commerce demand.

In his article, Dale and I discuss how the vision industry is responding to this challenge by enabling more flexible picking solutions that can handle more objects.

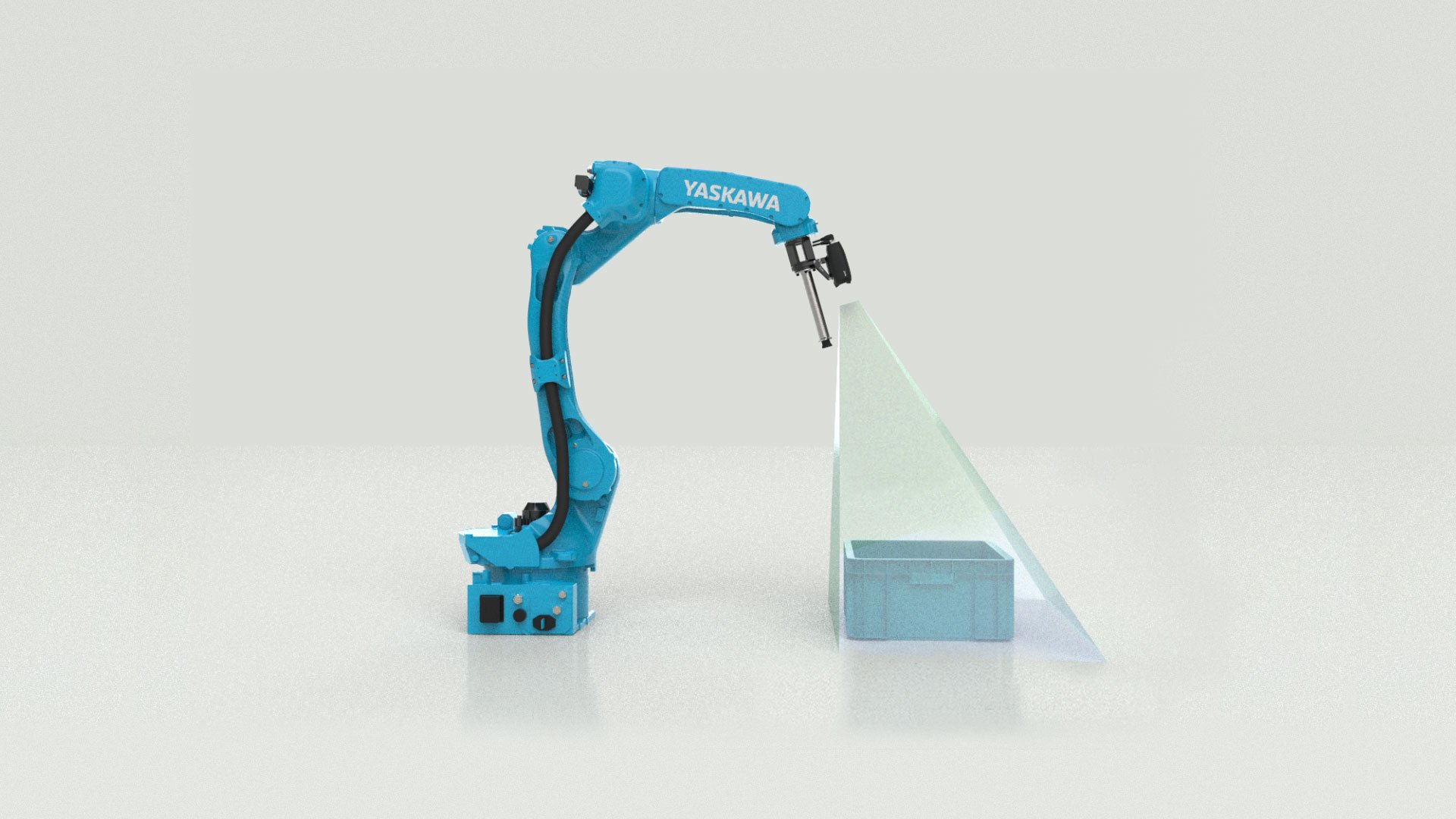

The ideal bin-picking solution will be able to detect, pick and place pieces that are densely packed, complexly arranged, hard to separate or have shiny and reflective surfaces, all while being faster than a human.

3D vision is typically considered a component only for detecting parts or SKUs in a bin. But it also plays a vital role in successfully picking and placing the objects.

Trueness describes how true your image is to the reality you are representing

"Some of our most prominent customers have come to us saying that despite being able to clearly see the objects in their bins using an incumbent 3D sensor, they are not able to pick them every time. This is because the point-cloud images are not true: there can be scaling errors, where objects appear larger or smaller than they actually are; rotation errors; or translation errors, where objects appear to be in a different orientation or position than they are in reality."

Trueness has a great impact on the picking and placing of parts, and on the success of your bin-picking or machine tending application.
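To make the effect of these errors concrete, here is a minimal Python sketch. It is an illustration only, not Zivid's method or SDK: the function names, the example point, and the 0.5% scaling error are all hypothetical, and the rotation error is simplified to a rotation about the camera's z-axis.

```python
import math

def apply_trueness_error(point, scale=1.0, yaw_deg=0.0, translation=(0.0, 0.0, 0.0)):
    """Return where a camera with the given (hypothetical) errors reports `point`.

    `point` and `translation` are (x, y, z) in millimeters in the camera frame.
    """
    x, y, z = point
    # Scaling error: the scene appears uniformly larger or smaller.
    x, y, z = scale * x, scale * y, scale * z
    # Rotation error: simplified here to a rotation about the z-axis.
    a = math.radians(yaw_deg)
    x, y = x * math.cos(a) - y * math.sin(a), x * math.sin(a) + y * math.cos(a)
    # Translation error: the scene appears shifted.
    tx, ty, tz = translation
    return (x + tx, y + ty, z + tz)

def pick_error_mm(true_point, reported_point):
    """Distance between the real grasp point and the one the robot is sent to."""
    return math.dist(true_point, reported_point)

# Assumed example: a grasp point 800 mm in front of the camera,
# imaged with a 0.5% scaling error and no other errors.
true_point = (100.0, 50.0, 800.0)
reported = apply_trueness_error(true_point, scale=1.005)
error = pick_error_mm(true_point, reported)
print(f"Pick position error: {error:.2f} mm")
```

In this assumed setup, a scaling error of only half a percent moves the reported grasp point by about 4 mm, even though the object is clearly visible in the point cloud. That can be enough to miss a small part.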

What to read next → Unlocking the Full Potential of Bin Picking with the Right 3D Camera

Another challenge in random bin-picking is that the point cloud quality from widely used sensors is not good enough.

"While smart detection algorithms and AI can recognize some objects, objects that aren’t as well-defined cannot be confidently distinguished," Orheim explained. "These cameras are good for their price point, but from a bin picking perspective, we believe they are not quite up to scratch."

Zivid addresses these issues with our 3D cameras (Zivid Two, Zivid One+), which use structured white light for 3D imaging while also achieving high dimensional trueness.

"What sets our 3D cameras apart is how we use color, which comes into play when using AI," said Orheim. "Color is becoming a much more interesting quality of the point cloud, because it can be used by an AI or deep learning algorithm as an additional factor to distinguish between objects."
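As a rough illustration of why color helps, the Python sketch below compares two objects with identical geometry. It is a toy example under stated assumptions: the `(x, y, z, r, g, b)` point layout, the helper names, and the two boxes are hypothetical and do not represent the Zivid SDK or any particular AI model.

```python
from statistics import mean

def extent(points):
    """Bounding-box size (dx, dy, dz), computed from the XYZ values only."""
    return tuple(
        max(p[i] for p in points) - min(p[i] for p in points) for i in range(3)
    )

def mean_color(points):
    """Average (r, g, b) over all points of an object."""
    return tuple(mean(p[i] for p in points) for i in range(3, 6))

# Two hypothetical objects with the same footprint but different colors.
# Each point is (x, y, z, r, g, b), with XYZ in millimeters.
corners = [(0, 0, 0), (40, 0, 0), (0, 30, 0), (40, 30, 0)]
red_box = [(x, y, z, 200, 30, 30) for x, y, z in corners]
blue_box = [(x + 60, y, z, 30, 30, 200) for x, y, z in corners]

# Geometry alone cannot tell the two objects apart...
same_shape = extent(red_box) == extent(blue_box)
# ...but the color channels can.
different_color = mean_color(red_box) != mean_color(blue_box)

print(f"Same shape: {same_shape}, different color: {different_color}")
```

A real detection pipeline would feed color into a learned model rather than compare averages, but the principle is the same: color gives the algorithm an extra signal when shape alone is ambiguous.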

Over the past two years, Zivid has been developing artifact reduction technology, called ART, which improves the quality of the 3D data captured from reflective and shiny parts.

"This solution is a combination of innovations in both hardware and software," Orheim said. "It means that customers now get all the benefits of structured light cameras when picking very challenging reflective parts in the bin."

Want to learn more about the new Stripe vision engine and ART for picking shiny and reflective parts? You can read more about Stripe here.

