I interned as a software engineer at Zivid this summer. It has been an invaluable experience to work so closely with such a skillful and dedicated team on a daily basis. The best part might be the level of trust the whole team placed in me, even though I was “just” an intern: they constantly challenged and motivated me and gave me a lot of responsibility. I instantly felt right at home as an engineer on the team!

My task this summer was to port the Zivid SDK to ARM. As of today (the date of this post), the SDK only officially supports machines with x86_64-based processors. However, with the advent of devices that pack powerful computing capabilities into the palm of our hand, one term we hear a lot these days is ARM.

ARM Processor, source: https://www.arm.com/


ARM processors, which have a reduced instruction set computing (RISC) architecture, typically require fewer transistors than processors with a complex instruction set computing (CISC) architecture, such as the x86-based processors from manufacturers like Intel and AMD found in most personal computers. This results in lower cost, lower power consumption, and less heat to dissipate. These characteristics are desirable for light, portable, battery-powered devices, including smartphones, tablet computers, and other embedded systems.

Beyond ARM's dominance in mobile and embedded devices, there has recently been a shift toward ARM-based processors in PCs as well. For instance, as early as 2017, Qualcomm and Microsoft announced the first Windows 10 devices with ARM-based processors. Furthermore, Apple announced this year that it is transitioning its MacBooks to ARM-based processors. This growing demand is an important reason why we should be (and are) porting our SDK to ARM.

How?

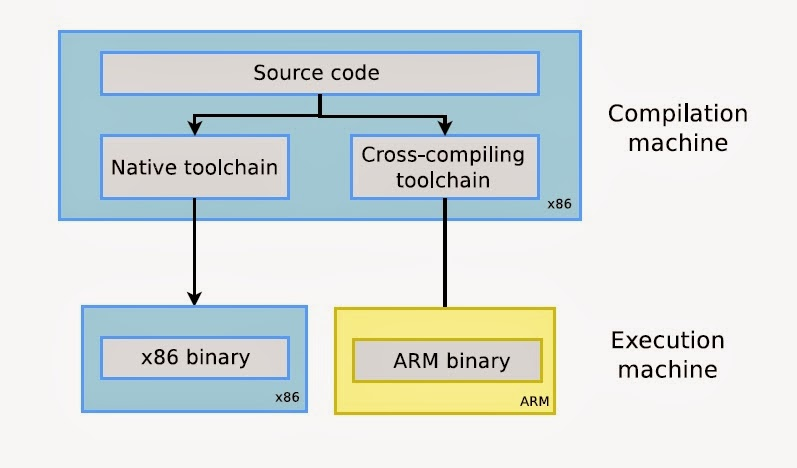

The first keyword for porting the Zivid SDK without significantly slowing down the testing pipeline is cross-compilation: compiling code for one computer system (the target) on a different system (the host). The image below summarizes this process quite well:

Cross-compilation process

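The following minimal sketch makes the host/target distinction concrete. It is not code from the Zivid SDK, and the function name is hypothetical. It reports the architecture a binary was *compiled for* by using macros that GCC and Clang predefine for the target.

```cpp
#include <string>

// Hypothetical helper: reports the architecture this binary was compiled
// FOR, using macros predefined by GCC/Clang. When cross-compiling, these
// macros describe the target, not the host that runs the compiler.
std::string targetArchitecture()
{
#if defined(__aarch64__)
    return "aarch64";  // 64-bit ARM
#elif defined(__arm__)
    return "arm";      // 32-bit ARM
#elif defined(__x86_64__)
    return "x86_64";
#else
    return "unknown";
#endif
}
```

Assume an x86_64 Debian/Ubuntu host with the `g++-aarch64-linux-gnu` cross compiler installed. That package is an assumption; the Linaro toolchain uses the same `aarch64-linux-gnu-` prefix. Calling `targetArchitecture()` from a program built with plain `g++` yields `"x86_64"`. The same source built with `aarch64-linux-gnu-g++` yields `"aarch64"`, even though the compiler itself ran on x86_64.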

Wouldn’t it be simpler to just compile natively on an ARM-based computer? Indeed, that would simplify both building and testing the code. However, the only ARM device at our disposal was a single-board computer built around a system on a chip (SoC), the RockPro64 (which is rather powerful compared to other SoC boards!). Building the SDK on it would be almost an order of magnitude slower than on a “normal” development machine. Cross-compiling, in contrast, was often as quick as native compilation on a development machine. Using cross toolchains, such as the Linaro toolchain, was a lifesaver.

One takeaway from this process is to be careful with premature optimizations, especially if you are targeting multiple architectures, because many optimizations are architecture-specific. One example is vectorizing code by hand with x86 SIMD extensions such as SSE or AVX. ARM has its own SIMD extension, Arm NEON, so such code will not build for ARM as-is. Of course, it all depends on the use case, but don't forget that modern compilers are very smart and generally generate very efficient code.
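To illustrate the point about architecture-specific optimizations, here is a small hypothetical example (not taken from the SDK) of element-wise array addition written in two ways. The hand-vectorized version needs a separate code path for each SIMD extension: SSE on x86, NEON on ARM. The plain loop is portable, and compilers such as GCC at `-O3` can often auto-vectorize it for whichever target they are building for.

```cpp
#include <cstddef>

#if defined(__ARM_NEON)
#include <arm_neon.h>   // Arm NEON intrinsics
#elif defined(__SSE__)
#include <xmmintrin.h>  // x86 SSE intrinsics
#endif

// Portable version: plain C++ that builds for any target. Element-wise
// float addition needs no reassociation, so the compiler may vectorize it.
void addArraysPortable(const float* a, const float* b, float* out, std::size_t n)
{
    for (std::size_t i = 0; i < n; ++i)
        out[i] = a[i] + b[i];
}

// Hand-vectorized version: one code path per SIMD extension, 4 floats at a time.
void addArraysSimd(const float* a, const float* b, float* out, std::size_t n)
{
    std::size_t i = 0;
#if defined(__ARM_NEON)
    for (; i + 4 <= n; i += 4)
        vst1q_f32(out + i, vaddq_f32(vld1q_f32(a + i), vld1q_f32(b + i)));
#elif defined(__SSE__)
    for (; i + 4 <= n; i += 4)
        _mm_storeu_ps(out + i, _mm_add_ps(_mm_loadu_ps(a + i), _mm_loadu_ps(b + i)));
#endif
    // Scalar tail, or the whole array on targets with neither extension.
    for (; i < n; ++i)
        out[i] = a[i] + b[i];
}
```

Both functions produce identical results. Only the second has to be maintained separately for each instruction set.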

The second keyword, for porting the SDK in a robust and reproducible manner, is virtualizing the builds through containers. Tools such as Docker were essential for creating reproducible builds. Containerizing the builds and setups also had the added benefit of serving as documentation, for example of how certain dependencies are built. This is especially convenient if a package manager is not used, since the dependencies then need to be recompiled for the new architecture. In the worst case, without a package manager, you end up in a big mess of dependency cross-compilation: you have to recompile the dependencies of every dependency, then the dependencies of those dependencies, and so on…
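The "dependencies of dependencies" problem amounts to walking a dependency graph. The following minimal sketch computes every package that must be cross-compiled for a given root, with dependencies ordered before dependents (a depth-first post-order). The package names and the graph representation are hypothetical, and the graph is assumed to be acyclic.

```cpp
#include <map>
#include <set>
#include <string>
#include <vector>

// Hypothetical representation: each package maps to its direct dependencies.
using DependencyGraph = std::map<std::string, std::vector<std::string>>;

namespace detail {
void visit(const std::string& package, const DependencyGraph& graph,
           std::set<std::string>& visited, std::vector<std::string>& order)
{
    if (!visited.insert(package).second)
        return; // already visited (shared dependency)
    auto it = graph.find(package);
    if (it != graph.end())
        for (const auto& dependency : it->second)
            visit(dependency, graph, visited, order);
    order.push_back(package); // all of its dependencies were added first
}
} // namespace detail

// Returns every package that must be cross-compiled for `root`, in a valid
// build order. Assumes the graph has no cycles.
std::vector<std::string> crossBuildOrder(const std::string& root, const DependencyGraph& graph)
{
    std::set<std::string> visited;
    std::vector<std::string> order;
    detail::visit(root, graph, visited, order);
    return order;
}
```

For an `sdk` that depends on `imaging` and `network`, where `network` itself needs `crypto` and both need `compression`, the result is `compression, imaging, crypto, network, sdk`. Five packages must be rebuilt for the new architecture, even though the SDK only names two directly.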

Finally, assuming the code cross-compiles successfully, the last step is to test it. However, using the weaker ARM SoC boards as testing devices in our pipeline wasn’t an attractive choice. The main reason was the limited compute of the SoC device compared to the other testing agents, which boast powerful server CPUs. Another reason was potential hardware problems: the device used an SD card as its storage medium, which frequent writes could quickly wear out. Instead, we found that emulating an ARM environment with QEMU worked quite well. Naturally, it still didn’t run as fast as running an x86_64 binary natively, but it saved us from adding new and unknown machines as testing agents. Combining the emulation features of QEMU with the containerization of Docker therefore gave us a simple, reproducible, and platform-independent way of testing the SDK.
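As a hypothetical sketch of how a test runner can use emulation, the helper below builds the command line for a test binary. If the binary was cross-compiled for aarch64 but the tests run on another architecture, the command launches it through QEMU's user-mode emulator, `qemu-aarch64`. The `-L` flag tells QEMU where to find the target's dynamic loader and libraries. The `/usr/aarch64-linux-gnu` path assumes a Debian/Ubuntu-style cross setup, and the function name is made up for illustration.

```cpp
#include <stdexcept>
#include <string>
#include <vector>

// Hypothetical helper: the command used to launch a test binary. Native
// binaries run directly; aarch64 binaries on a foreign host run under
// QEMU user-mode emulation.
std::vector<std::string> testCommand(const std::string& binary,
                                     const std::string& hostArch,
                                     const std::string& targetArch)
{
    if (hostArch == targetArch)
        return {binary}; // native build: no emulator needed
    if (targetArch == "aarch64")
        // Assumed sysroot location for the target's loader and libraries.
        return {"qemu-aarch64", "-L", "/usr/aarch64-linux-gnu", binary};
    throw std::invalid_argument("no emulator configured for target: " + targetArch);
}
```

An alternative that is more transparent for containers is to register QEMU with the host kernel through binfmt_misc. Docker can then run arm64 images directly with `docker run --platform linux/arm64 …`. That is one way to combine the two tools, though not necessarily the exact setup of our pipeline.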

Conclusion

After the SDK code has gone through all these steps, we end up with a multi-architecture port of the SDK that is appropriately tested – without the need to complicate our internal testing pipeline with weaker embedded devices!

I hope this short post, albeit without going into much detail, demonstrated that cross-building can be introduced into a developer’s build and test pipeline with very little effort. The takeaway, in spirit, is to avoid reinventing the wheel as much as possible and instead use the tools at your disposal. That is what made our lives simpler: for example, letting the cross-compiler handle the general optimizations for us, and letting Docker and QEMU virtualize and emulate a building and testing environment for us.

Finally, I hope I was able to convey the fun, diverse, and challenging tasks I was allowed to work on this summer at Zivid.
