In this blog post, we will look at why collaborative robots (cobots) need color point clouds, walk through three common use cases, and show how you can start capturing point clouds yourself.

ABB's cobot - YuMi. Source: abb.com

Cobots and Point Clouds

Today cobots are used in automated manufacturing, pick and place, and quality inspection applications, and they perform tasks that are too laborious, monotonous, or sometimes even too dangerous for human workers (Source: Know your machine: Industrial robots vs. cobots).

By pairing the cobot capabilities with 3D sensors, tasks such as detection, inspection, and processing of random objects become easier and more accurate. With the addition of sensing in three dimensions, cobot applications can also work more efficiently alongside humans. 3D cameras, the sensor equivalent to our own eyes, provide the information needed as point clouds.

Point cloud example - PCB parts

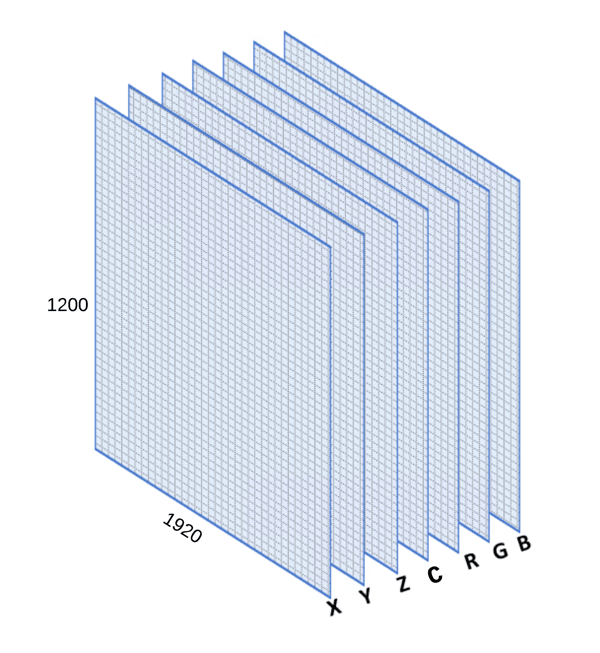

A point cloud consists of data points (pixels), each containing at least three coordinates (X, Y, Z) relative to the sensor (the 3D camera). These coordinates provide the spatial and depth information. A high-quality point cloud has as much data as possible (a high “density” of pixels with data) and a good signal-to-noise ratio. High-quality point clouds help cobots maneuver in space and, for instance, grasp target objects more reliably.
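To make the idea of density concrete, here is a minimal Python sketch. It assumes an organized point cloud stored as a grid of (X, Y, Z) tuples in which pixels without data hold NaN. The layout and the `density` helper are illustrative assumptions, not the Zivid SDK's data format.

```python
import math

NAN = float("nan")

# Hypothetical 2 x 3 organized point cloud: one (X, Y, Z) tuple per pixel,
# in millimetres relative to the camera. NaN marks a pixel with no data.
cloud = [
    [(10.0, 5.0, 600.0), (11.0, 5.0, 601.0), (NAN, NAN, NAN)],
    [(10.0, 6.0, 600.5), (NAN, NAN, NAN), (12.0, 6.0, 602.0)],
]


def is_valid(point):
    """A point is valid when none of its coordinates is NaN."""
    return not any(math.isnan(c) for c in point)


def density(cloud):
    """Fraction of pixels that carry valid 3D data (0.0 to 1.0)."""
    points = [p for row in cloud for p in row]
    return sum(is_valid(p) for p in points) / len(points)


print(f"density: {density(cloud):.2f}")
```

In this toy grid, four of the six pixels carry data, so the density is about 0.67. A denser cloud gives the cobot more surface to reason about when planning a grasp.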

The quality of point cloud data varies with the characteristics of the target object and the background. It is therefore important for developers to choose the right 3D camera and to customize its settings to optimize point cloud results and reduce disturbances.

Why Color Point Clouds?

Zivid One+ 3D camera enables separating objects with similar shape

Typical 3D sensors produce grayscale or monochrome point clouds at various resolutions. Without color information, it is close to impossible to, for instance, separate similarly shaped objects. With such sensors, color is typically added by paying for an additional 2D color (RGB) camera, then calibrating the two devices and mapping the color information onto the XYZ data.
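The mapping step with a separate color camera can be sketched roughly as follows. This is a simplified illustration, assuming a pinhole model with no lens distortion and an RGB camera that differs from the 3D sensor only by a translation. Real rigs also need a rotation and a proper calibration procedure, and all names and numbers here are hypothetical.

```python
def project_to_pixel(point, translation, fx, fy, cx, cy):
    """Project a 3D point (3D-sensor frame) into the RGB image.

    Assumes a pinhole camera, no distortion, and a translation-only
    offset between the two sensors (a deliberate simplification).
    """
    x = point[0] + translation[0]
    y = point[1] + translation[1]
    z = point[2] + translation[2]
    if z <= 0:
        return None  # behind the RGB camera, no color available
    return round(fx * x / z + cx), round(fy * y / z + cy)


def colorize(points, rgb_image, translation, fx, fy, cx, cy):
    """Attach (R, G, B) to every point that lands inside the RGB image."""
    height, width = len(rgb_image), len(rgb_image[0])
    colored = []
    for p in points:
        pixel = project_to_pixel(p, translation, fx, fy, cx, cy)
        if pixel is None:
            continue
        u, v = pixel
        if 0 <= u < width and 0 <= v < height:
            colored.append((*p, *rgb_image[v][u]))
    return colored


# Toy 3 x 3 RGB image and three points; the last one falls outside the image.
rgb_image = [
    [(0, 0, 0)] * 3,
    [(255, 0, 0), (0, 255, 0), (0, 0, 255)],
    [(0, 0, 0)] * 3,
]
points = [(0.0, 0.0, 100.0), (1.0, 0.0, 100.0), (500.0, 0.0, 100.0)]
colored = colorize(points, rgb_image, (-1.0, 0.0, 0.0), 100.0, 100.0, 1.0, 1.0)
print(colored)
```

Any error in the assumed offset or intrinsics shifts colors onto the wrong points, which is why this extra calibration step matters.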

Zivid's industrial-grade 3D cameras use the same sensor to capture 3D point cloud and RGB data. This means that no additional 2D color sensor and no XYZ-to-RGB matching are needed. The Zivid One+ color cameras have a 2.3-megapixel (1920 x 1200) sensor, which gives you up to 2.3 million points, each with X, Y, Z, R, G, B, and C (contrast value) information, for every 3D point cloud image you capture.

The high-resolution, 1:1 mapping between 2D color and 3D information adds significant value to robot vision applications: it not only makes it easier to separate similarly shaped objects, but also improves the cobot's general ability to process the fine details of an object. (Read more about Point Cloud in Zivid Academy)
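When every point already carries its own color, separating similarly shaped objects can be sketched as a simple color classification. The example below is an illustrative assumption, not a Zivid algorithm: points are plain (X, Y, Z, R, G, B) tuples, and the reference colors and labels are made up.

```python
def nearest_label(rgb, reference_colors):
    """Return the label whose reference color is closest in RGB space."""
    def squared_distance(a, b):
        return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

    return min(
        reference_colors,
        key=lambda label: squared_distance(rgb, reference_colors[label]),
    )


def split_by_color(points, reference_colors):
    """Group XYZ coordinates by the nearest reference color of each point."""
    groups = {label: [] for label in reference_colors}
    for x, y, z, r, g, b in points:
        label = nearest_label((r, g, b), reference_colors)
        groups[label].append((x, y, z))
    return groups


# Two hypothetical parts with identical shape but different color.
reference_colors = {"red_cap": (200, 30, 30), "blue_cap": (30, 30, 200)}
points = [
    (0.0, 0.0, 500.0, 190, 40, 35),
    (1.0, 0.0, 500.0, 25, 35, 210),
    (0.0, 1.0, 500.0, 205, 20, 28),
]
groups = split_by_color(points, reference_colors)
print(groups)
```

A grayscale point cloud would give these two parts the same geometry and no way to tell them apart. Production systems would typically use a more robust color space and combine color with shape.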

Three Use Cases

The following use cases show how cobots can benefit from high-quality point clouds.

1. Tiny Objects

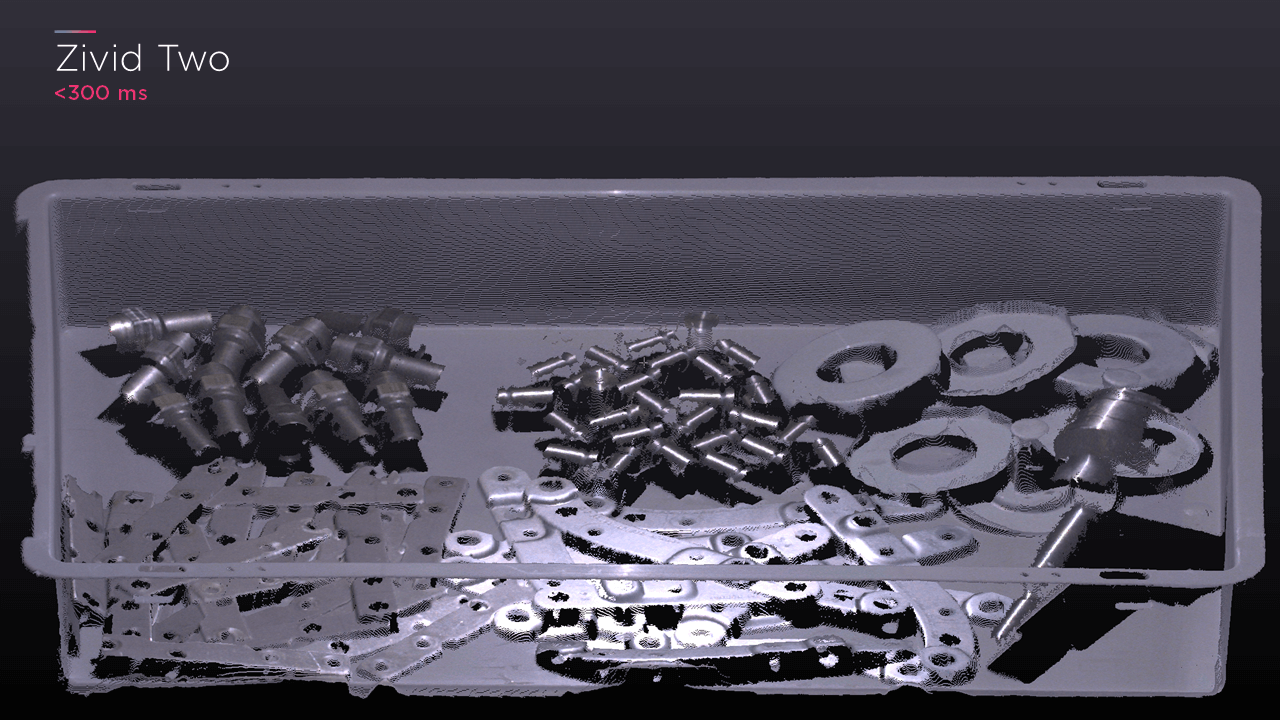

Random bin-picking is one of the most common cobot applications, and it requires that the system can detect, classify, localize, and pick different parts. High-resolution robot vision becomes especially important in applications that involve smaller parts. With high-quality point clouds, the cobot solution can find and separate small objects with µm-level accuracy.

Point Cloud example - Mix of screws and nuts

2. Challenging Objects – the “Uglies”

Absorbing or shiny objects can be challenging to capture in high-quality point clouds. With a 3D HDR (high dynamic range) feature, objects that were traditionally considered challenging can even share the same scene and still produce great results.

Comparison between Zivid One+ full dynamic range color point cloud and a limited dynamic range 3D camera output

In the example above, the Zivid One+ camera captured the black, absorbing cloth with clear boundary lines, while the other 3D output, with its limited dynamic range, barely identified the object. The shiny metal part was also represented correctly with high dynamic range, whereas the other 3D output showed a black bar caused by reflection.
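One way to picture why a high dynamic range helps is to think of merging several exposures of the same scene. The sketch below is a conceptual illustration only and does not describe how the Zivid One+ implements HDR: it assumes each capture yields, per pixel, either no data or a hypothetical (depth, SNR) pair, and keeps the measurement with the highest SNR.

```python
def merge_exposures(captures):
    """Merge per-pixel depth from several exposures of the same scene.

    Each capture is a list with one entry per pixel: None when the pixel
    has no data (under- or over-exposed), otherwise (depth_mm, snr).
    For each pixel, the measurement with the highest SNR wins.
    """
    merged = []
    for candidates in zip(*captures):
        valid = [c for c in candidates if c is not None]
        if valid:
            depth, _snr = max(valid, key=lambda c: c[1])
            merged.append(depth)
        else:
            merged.append(None)
    return merged


# Pixel 0: dark cloth, pixel 1: neutral surface, pixel 2: shiny metal,
# pixel 3: nothing measurable in either exposure.
short_exposure = [None, (702.0, 4.0), (650.0, 30.0), None]
long_exposure = [(801.0, 25.0), (700.0, 18.0), None, None]

merged = merge_exposures([short_exposure, long_exposure])
print(merged)
```

The short exposure alone loses the dark cloth and the long exposure alone loses the shiny part, while the merged result covers both.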

Point cloud example – shiny parts

3. Industrial Environment

In general, the quality of point clouds varies with the work environment: working distance, lighting, temperature, dust, and so on. To handle a wide range of conditions, a flexible, industrial-grade 3D camera system is required. The IP65-certified Zivid One+ camera ensures operation in humid and dusty work environments.

With its SDK, developers can easily adjust point cloud settings to adapt to a unique work environment, from food handling to palletizing. Ensuring reliable operation regardless of surroundings is critical for industries that run on a 24/7 basis. The video is an example of how industrial robots with 3D vision contribute to improvements in the food industry.

Random picking of food by Sheff Foodpicker

How to Capture Point Clouds

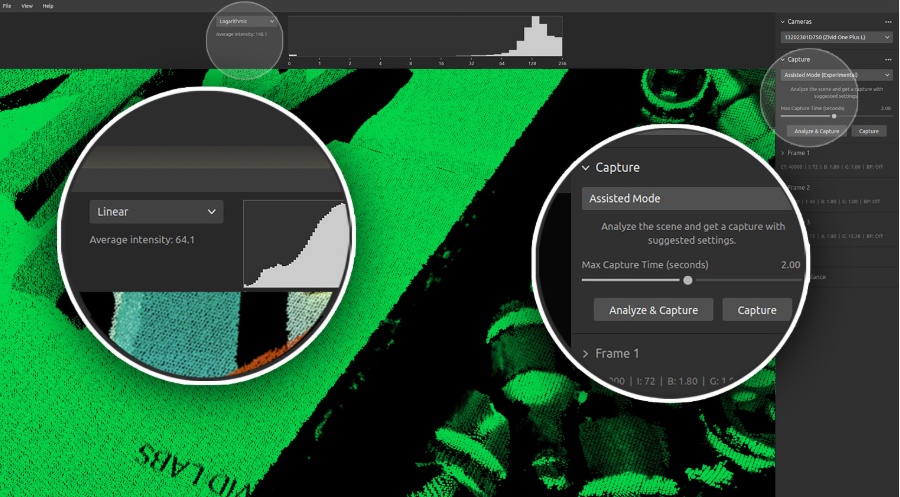

To capture 3D point clouds yourself, you need a 3D camera (hardware), capturing software and a point cloud viewer (software), and your target object. Zivid Studio is a free point cloud software tool provided with the Zivid SDK. Zivid Studio is essential to capture, analyse, and evaluate point cloud results. It also gives the flexibility to visualize the output as 3D, 2D and depth maps to provide more information. If you use Zivid Studio, there are two ways to capture point clouds.

Capture Assistant Mode

As with any digital camera, you can capture 3D images using an automatic mode, called Capture Assistant. Capture Assistant analyses the scene, suggests settings, and delivers optimal results for you. This is a good alternative if you don’t want to customize a frame manually.

Manual Mode

To optimize the capture for your specific use case, you can use the manual mode in Zivid Studio. You can test different settings using the controls for exposure time, iris/aperture, brightness, and gain, in addition to global filters such as contrast, outlier, and reflection. (Read more about Control Panel in Zivid Academy)
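If you later want to test settings systematically rather than by hand, the idea can be sketched as a small parameter sweep. Everything in this example is hypothetical: `CaptureSettings`, its fields, and the `fake_score` function are stand-ins for illustration and are not part of the Zivid SDK. In practice the score would come from capturing with each candidate and measuring, for example, point cloud density.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class CaptureSettings:
    """Hypothetical settings container; field names are illustrative only."""
    exposure_time_us: int
    f_number: float
    gain: float


def candidate_settings(exposures_us, f_numbers, gains):
    """Enumerate every combination of the given setting values."""
    return [
        CaptureSettings(e, f, g)
        for e, f, g in product(exposures_us, f_numbers, gains)
    ]


def best_settings(candidates, score):
    """Return the candidate with the highest score."""
    return max(candidates, key=score)


def fake_score(settings):
    """Stand-in for 'capture and measure quality'; not a real metric.

    Pretends the scene is best exposed around 10 000 us and that
    higher gain adds noise.
    """
    return -abs(settings.exposure_time_us - 10_000) - 1_000 * (settings.gain - 1.0)


candidates = candidate_settings([5_000, 10_000, 20_000], [2.8, 5.6], [1.0, 2.0])
best = best_settings(candidates, fake_score)
print(len(candidates), best)
```

Passing the scoring function in as an argument keeps the sweep independent of how a capture is actually taken and evaluated.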

Evolving Software

Zivid’s SDK is available to help you build your own 3D vision application. Our SDK includes built-in tools and examples to help developers reduce the time it takes to go to market. The SDK is updated every other month to continuously improve the performance of the Zivid One+ 3D cameras and to introduce new features based on our customers’ feedback.

What’s Next?

3D vision allows developers to build advanced cobot systems and enables more effective and reliable collaboration between robots and humans. Here are three quick steps to get started with 3D vision for cobots.

- Check out existing point cloud examples to find relevant use cases for you. (Check out our Point Cloud Image Library)

- Schedule a demo meeting with us to discuss your requirements and get a project estimate

- Order a 3D camera to test out point clouds yourself (Order a Zivid One+ 3D Camera)

If you have any questions about 3D vision for your cobots, contact us or leave your questions in the comments below.
