A beginner's guide to 3D machine vision cameras

What is a 3D vision camera? What should you consider when choosing the right 3D camera? How does it work once you get it? This guide helps you answer these questions and walks through the steps needed to build your application with 3D vision technology.

What is a 3D machine vision camera?

Search for the term 3D cameras on, for instance, Amazon, and chances are you will see a long list of 360-degree consumer cameras for video and VR experiences. For industrial use, though, a 3D camera is a machine vision sensor specifically designed to capture the environment with accurate depth information in three dimensions. We won't cover lidars or cameras used in vehicles here. Instead, we focus on the category of 3D cameras that are integrated into manufacturing processes and production lines, or used together with robots in industrial applications.

Why do we need a 3D camera for industrial automation applications?

In the last few decades, 2D cameras have been widely used for applications such as barcode reading, object tracking, and presence detection. The need for 3D cameras started to grow because some target objects require µm-level point precision together with depth information. 3D technology also helps with difficult objects such as shiny parts, and with challenging environments where lighting is limited or excessive. (Read this article for more information: Why 3D machine vision? What's wrong with 2D machine vision?)

As the terms indicate, 3D cameras and machine vision technology act as the eyes of a machine. As with a human, the information from the eyes is then processed by the brain (the computer). A 3D camera captures a snapshot of the world together with the depth information that machine vision algorithms need. This is used to determine the size of an object, its color, and the distance between two points, just like we do with our own eyes.
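To make the "size" and "distance between two points" ideas concrete, here is a minimal sketch in plain Python. It assumes each point from the camera is an (x, y, z) tuple in millimetres; the function names and sample coordinates are made up for illustration and do not come from any particular camera SDK.

```python
import math

def distance(p, q):
    """Euclidean distance between two 3D points given as (x, y, z)."""
    return math.dist(p, q)

def bounding_box_size(points):
    """Axis-aligned extent (x, y, z) of a set of 3D points."""
    xs, ys, zs = zip(*points)
    return (max(xs) - min(xs), max(ys) - min(ys), max(zs) - min(zs))

# Hypothetical points on an object, in camera coordinates (mm).
corner_a = (0.0, 0.0, 600.0)
corner_b = (30.0, 40.0, 600.0)

print(distance(corner_a, corner_b))  # 50.0
print(bounding_box_size([corner_a, corner_b, (15.0, 20.0, 590.0)]))
```

A real point cloud contains many thousands of points, so production code would normally use an array library rather than Python tuples, but the arithmetic is the same.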

The purpose of a 3D machine vision system is to enable an application to perform specific tasks faster, smarter, and more accurately. Seen from a given distance, large objects are easier to detect and recognize, while small objects require higher-quality point clouds from the sensor. 3D machine vision cameras are typically used in bin-picking, logistics, and inspection applications. As more manufacturing industries adopt robots and automation, the 3D machine vision market is expected to keep growing.

A 3D camera enabled bin-picking example

Take an application like bin-picking of many small, randomly placed objects. A complete machine vision system for this task typically requires a high-performance 3D camera to image the targets in the bin correctly. The computer then processes the point cloud from the 3D camera to extract accurate information such as the next gripping point. 3D cameras are often mounted in a fixed position above the target. For flexibility, more and more cameras are instead mounted on or near the robot's gripper.
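As a rough illustration of turning a point cloud into a gripping candidate, the sketch below simply picks the valid point closest to a camera mounted above the bin. This is a deliberately naive heuristic and not how any particular bin-picking product works; real systems typically add object detection, pose estimation, and collision checks. The function name is hypothetical, points are assumed to be (x, y, z) tuples with z as the distance from the camera, and missing measurements are assumed to be marked as NaN.

```python
import math

def next_pick_candidate(points):
    """Return the valid point with the smallest z (topmost in the bin), or None."""
    # Drop points where the camera could not measure depth (assumed NaN).
    valid = [p for p in points if not any(math.isnan(c) for c in p)]
    if not valid:
        return None
    return min(valid, key=lambda p: p[2])

# Hypothetical point cloud from a camera looking straight down (mm).
cloud = [
    (0.0, 0.0, 700.0),
    (10.0, 5.0, 650.0),
    (float("nan"), float("nan"), float("nan")),
]
print(next_pick_candidate(cloud))  # (10.0, 5.0, 650.0)
```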

What are the key considerations when choosing a 3D machine vision camera?

The following aspects need to be considered when you compare 3D machine vision cameras from different vendors.

Type of Application

To choose the right 3D camera, it is critical to understand how your application works and what you want to achieve by adding 3D vision to your automation system. A bin-picking system requires high accuracy from the 3D camera because it needs to identify small parts in batches. In the logistics industry, accuracy might not be as critical, but the automated pick-and-place solution must handle packages 24/7. A 3D camera can also be used in quality control to verify production and detect errors quickly and reliably. (Read this example: Improving wheel alignment with Zivid 3D cameras.) If, for instance, your application needs to separate similar types of groceries, color detection should be a requirement, and you need a 3D machine vision camera with native RGB color.

Once you have a clear goal for using 3D machine vision technology in your application, you can start listing your requirements and decide what to prioritize. Which element matters most to you? Is it resolution, processing speed, field of view, or the ability to handle various materials?

As every use case is unique, a 3D machine vision camera that works for others may not work for you. Having your own checklist will save you time and effort when comparing different 3D camera solutions.

Accuracy

To compare the accuracy of different 3D cameras, you need to understand the terms used to describe accuracy.

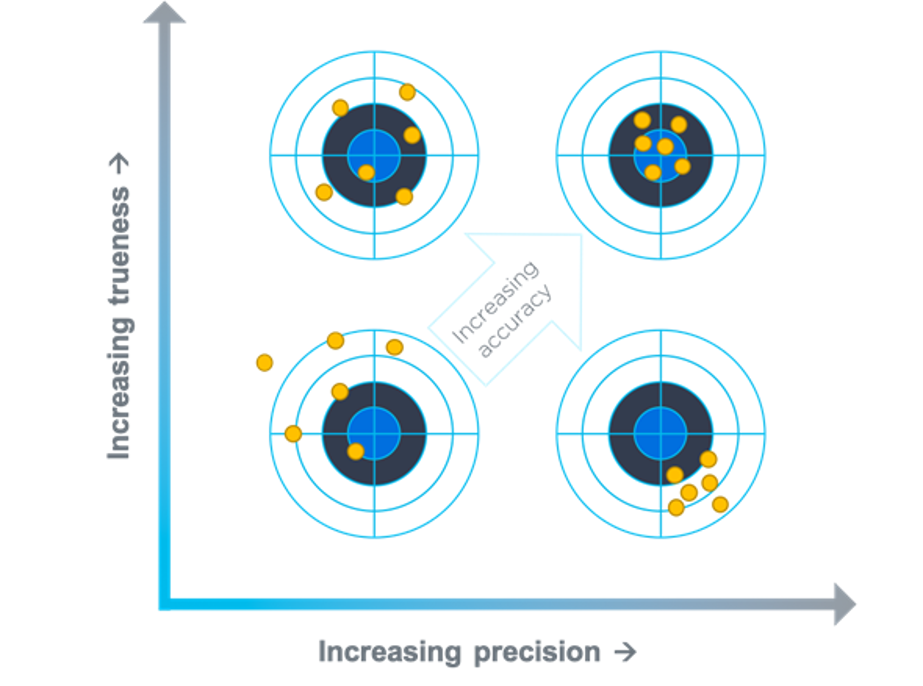

According to ISO 5725, accuracy is the combination of precision and trueness:

- Precision: Describing random errors, a measure of statistical variability.

- Trueness: Describing systematic errors, a measure of statistical bias.

- Accuracy: Describing the combination of random and systematic errors. It covers both precision and trueness.

Figure 1. ISO 5725

As you can see in Figure 1, a measurement is accurate only when it is both precise and true. Keep in mind that the accuracy numbers in datasheets and other documents vary depending on conditions such as working distance, ambient temperature, ambient light, and camera settings.
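The difference between the two terms is easier to see with numbers. The sketch below is a simplified illustration, not the full ISO 5725 procedure: it assumes you have measured the same known reference length several times, estimates trueness as the offset of the mean from the reference, and estimates precision as the sample standard deviation. The readings and function names are invented for the example.

```python
import statistics

def trueness_error(measurements, reference):
    """Systematic error: mean of the measurements minus the reference value."""
    return statistics.fmean(measurements) - reference

def precision(measurements):
    """Random error: sample standard deviation of the measurements."""
    return statistics.stdev(measurements)

# Hypothetical repeated measurements of a 100.0 mm reference object.
readings_mm = [100.25, 100.5, 100.0, 100.25]
reference_mm = 100.0

print(f"trueness error: {trueness_error(readings_mm, reference_mm):.3f} mm")
print(f"precision (std dev): {precision(readings_mm):.3f} mm")
```

A camera can score well on one measure and poorly on the other: tightly clustered readings that are all offset from the reference are precise but not true, while readings scattered evenly around the reference are true but not precise.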

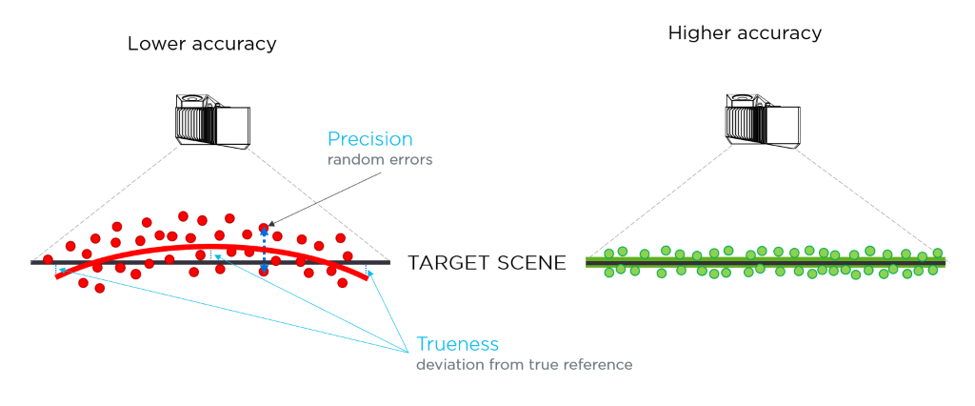

Figure 2. 3D Camera Accuracy Test

Ease of Use

Three elements make your development process more manageable: hardware, software, and documentation. From a hardware perspective, a 3D camera should be flexible enough to be mounted either on the robot or in a stationary position, depending on your use case. Cabling matters too: the cables and cable management need to be designed for machine vision applications so that they withstand twisting, bending, and pulling forces.

Machine vision software is an essential part of your application development, so it should be tested before you choose your 3D camera. Check whether it is easy to set up, supports your programming language, and has built-in features for advanced image calibration and analysis. It is also worth reading the release notes to see bug fixes, new features, and how frequently new versions are released.

Well-organized documentation will help you get started with your 3D camera and solve any issues quickly in the future. Look through the datasheets, training materials, and examples, and find out what kind of support you can expect during the development phase.

Safety

If you are building a cobot (collaborative robot) application, make sure that your camera is eye-safe and not a nuisance for the people working near it. A non-laser-based camera can be advantageous because it avoids exposure to laser light, whether direct or reflected from shiny objects. Plus, you don't have to wear laser safety glasses or appoint a laser safety officer to manage laser hazards.

How can I get started with a 3D camera?

Once you have ordered and received a 3D camera, you are likely to take the following steps.

1. Install a software package

A 3D camera usually comes with a machine vision software package. You can download it to your PC to view your captures as point clouds.

2. Mount your 3D camera

You can decide where to set up your camera depending on your use case and working environment. Test different positions to remove reflections and get high-resolution results.

3. Connect the 3D camera to your PC

Though it sounds easy, it is vital that you use the correct cable and connect it to your PC properly. A poor setup can cause data transfer errors, which may result in a lost connection or corrupted 3D images.

4. Capture 3D images

Once you complete the initial setup, explore built-in features and settings available in your machine vision software. Try different angles and filters to get the best result out of a 3D image. As an example, check out what you can expect from our Zivid Studio software tool in this 3-min video:

5. Start developing with SDK

To start working on your application, use the camera's SDK to customize settings and to access tools, utilities, libraries, and application examples.

And what's next?

We hope that this guide helps you find the right 3D machine vision camera and understand what to expect once you have received the camera. If you want to learn about our 3D camera offerings, please check out the resources below.

Sometimes, it's best to see how the camera works and ask questions in person. You can book an online demo meeting with one of our engineers to learn more about our 3D camera solution.

Or you can always leave a comment to ask questions.
