Collaborative Robots, Advanced Vision, & AI Conference

After the hustle and bustle of huge robotics shows like Automate in Chicago this year, CRAV.ai (Collaborative Robots, Advanced Vision, & AI Conference) brought a welcome change of pace. Held over two days in San Jose, California, the event featured focused technical sessions where experts shared insights from across the automation industry. Here are my general takeaways from the conference.

Redefining human-robot collaboration

Until very recently, collaborative robots simply meant pressure pads and slower arm speeds for the sake of safety. Not only are companies removing those types of constraints with smart software and vision systems, but innovators are also revolutionizing what it means for humans and robots to work together. Some examples of next-gen robot-human collaboration: construction-site exoskeletons; AI that translates edges and vertices (machine vision language) into shapes and objects (human vision language); and thoughtfully designed human-machine interfaces that both train machine learning models and achieve 100% system success rates (98% doesn’t cut it anymore!). Several presenters spoke about the visual aesthetic of a robot as a critical aspect of a system’s adoption in the field; one study showed that minor humanoid features like a face and arms on top of a robot drastically improve people's trust in and usage of the robot. Today, developers are already better at understanding how humans (critical thinking) and robots (accuracy + repeatability + speed) can combine their strengths to produce efficiencies that are greater than the sum of their parts.

Feeding the AI beast

As expected, most demos of picking and object recognition applications involved some type of AI, such as deep learning or neural networks. While a range of technical philosophies was presented (e.g., structured vs unstructured learning), there was one common theme: AI is hungry for data. This need isn’t new in AI, but the methodologies that system designers use to collect this data with robots are still nascent. Throughout the conference, I observed some common needs coming in the not-so-distant future: adding more sensors and vision systems, growing software teams, designing system architectures to support large data pipelines, and developing the cloud infrastructure to house and process data.

A confluence of innovation and scale

Robotics and machine vision have been around for decades and consequently span almost every industry at a huge scale: automotive, retail, food & beverage, medical, etc. The influx of vision, robot, and software startups has already disrupted this status quo, and cloud companies like Google and Amazon are rolling out more cloud infrastructure every day to set the stage. What can we surmise from this? First, solving the automation problem is valuable: companies already understand the bottom-line business outcomes they can achieve with Industry 4.0. Second, solving the automation problem is technically complex. As with the co-bot systems we help design every day, the biggest winners will be those who embrace continuous collaboration among hardware makers, software developers, integrators, and factory end users.

I thoroughly enjoyed my time at CRAV.ai and plan to return next year to see which of these trends have gained momentum. I expect more radical changes to the industry, and I look forward to seeing what robots can (teach themselves to) do in 2020.

If you have any more thoughts on these topics, or are interested in a demo of the Zivid One+, the world’s most accurate 3D color camera, you can reach Jesse on LinkedIn or the team at sales@zivid.com.

You May Also Like

These Related Stories

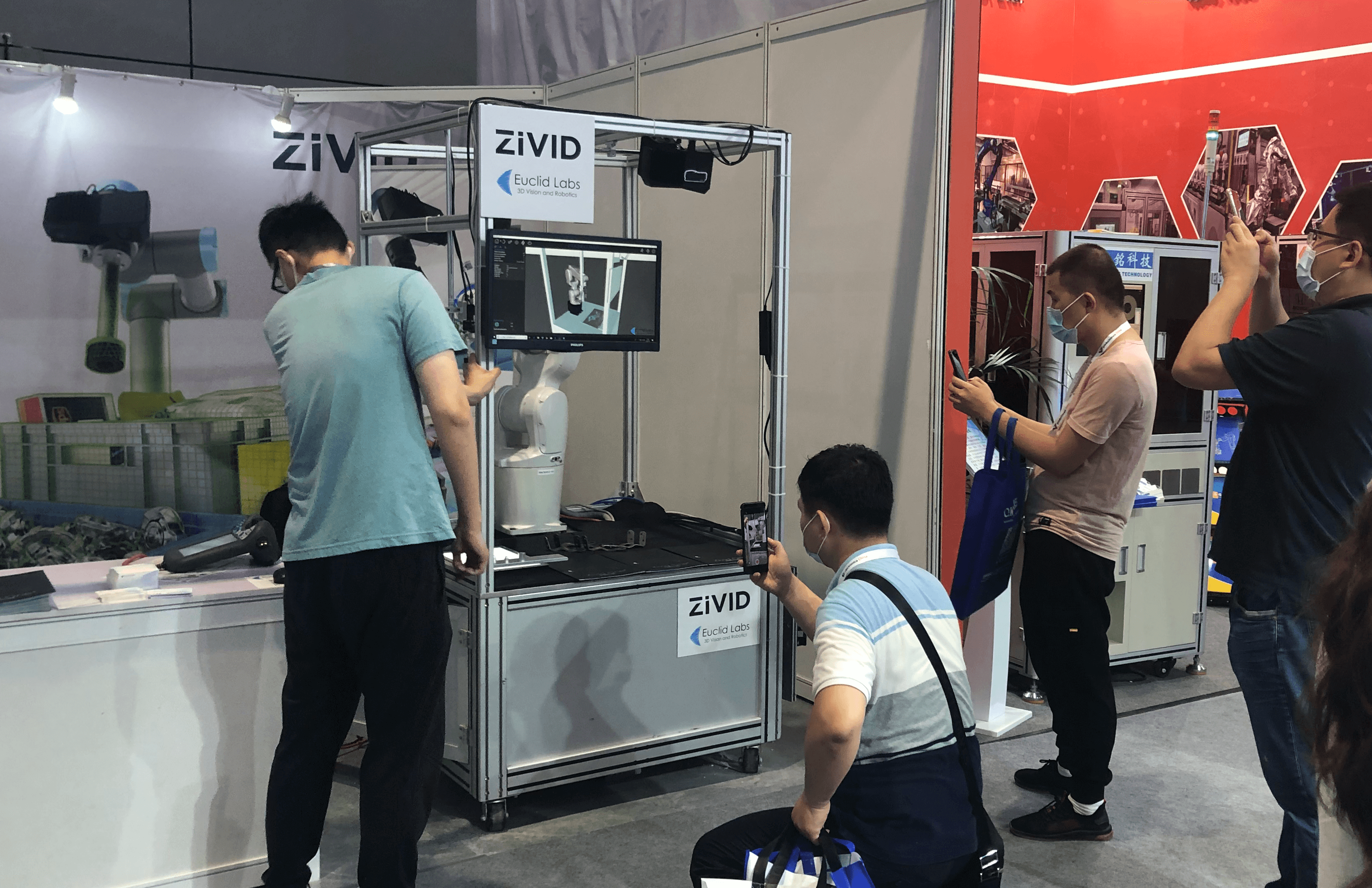

Zivid at Vision China 2020

Collaborative robots need flexible 3D vision